Are Hackers Speeding on the Information Highway?

(or “Has our Security Crashed?”)

I just came back from a discussion with our national CERT and brought some thoughts back home:

(TL;DR section at the end)

I have the impression that some of our security mechanisms, which seemed so sturdy and healthy until recently, are turning soft and weak in our hands. Developments in the last few years have definitely been in the fast lane, breaking all speed limits, and no data-highway patrol was there to stop them from speeding. The traditional approach to defining security mechanisms (let's call them technical controls) no longer seems right to me:

Raise the bar to a level where the remaining risk is acceptable for the next “X” years, assuming that technology advances at a certain rate.

(Use a reasonable number of years for “X” and assume that technology advances according to Moore’s Law.)
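As a back-of-the-envelope sketch of this rule: if attacker speed doubles every D months, then after X years an attacker can do 2^(12X/D) times more work, and every doubling eats one bit of key strength. The 18- and 24-month doubling periods are common readings of Moore’s Law, and the function name is made up for illustration.

```python
def extra_bits_needed(years: float, doubling_months: float = 18.0) -> float:
    """Extra bits of security margin needed to stay ahead of hardware whose
    speed doubles every `doubling_months` months (Moore-style growth)."""
    doublings = years * 12.0 / doubling_months
    # Each doubling of attacker speed halves the time needed to search
    # a keyspace, i.e. it costs exactly one bit of key strength.
    return doublings

for period in (18, 24):
    print(f"20 years, doubling every {period} months: "
          f"{extra_bits_needed(20, period):.1f} extra bits")
```

The rule only works as long as the real growth actually follows this curve.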

Some things developed differently in the last few years (let’s say around 2007/2008), at a pace far beyond Moore’s Law:

- Access to high performance computing resources is no longer limited to large organizations

- Distributed attacks apparently came as a surprise

- Information formerly under non-disclosure, including details of standards, became available to a large and in some cases malicious audience

- Advances are being made not only in hardware, where Moore’s Law still holds, but also in algorithms, which gives an extra boost, maybe like a nitrous-oxide injection if I may stick to my analogy above
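Why these factors hurt more than Moore alone can be sketched in a few lines: speedups from independent sources multiply, so their bit-equivalents add up. The factor values below are purely hypothetical placeholders chosen to show the arithmetic, not measurements.

```python
import math

def bits_lost(*speedups: float) -> float:
    """Security bits eroded by independent speedup factors.
    Multiplying the factors is the same as adding their log2 values."""
    return sum(math.log2(s) for s in speedups)

# Hypothetical example factors (not measured data):
gpu_vs_cpu = 2**7        # many-core GPU instead of a single CPU
volunteer_nodes = 2**12  # a few thousand cooperating machines
algorithmic = 2**8       # a better attack or an optimized implementation

print(bits_lost(gpu_vs_cpu, volunteer_nodes, algorithmic))
```

Losing some 27 bits in one go corresponds to roughly 40 years of Moore-style growth at an 18-month doubling period (27 × 18 months).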

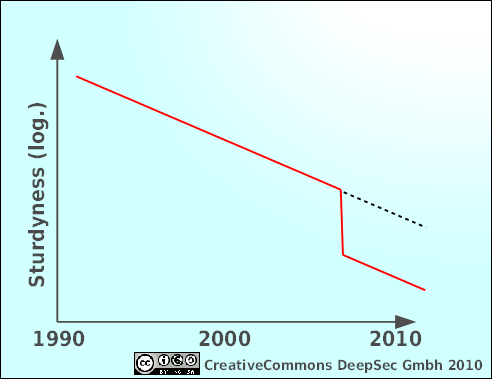

This is a simple illustration of how I perceive our situation. It’s not to scale and not backed by a vast amount of statistical data, so don’t use it for your thesis, diploma work or anything like that.

This gap, drop or “fast-forward” (call it whatever you like) concerns me a little, because security-wise it throws us a couple of years into the future, where our current technical controls look outdated and atavistic, like a Roman ballista on a modern battlefield.

Access to high performance computing

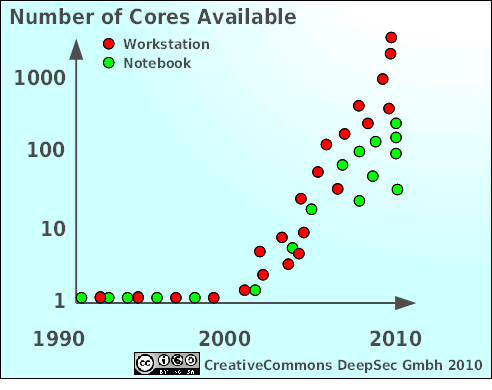

It’s not really the classical mainframe or vector cluster that is now entering private homes, and in some ways even children’s rooms, but our current generation of graphics cards. The number of independent computing cores available to a single user rises much faster than any prediction or assumption. Additionally, programming environments like nVidia’s CUDA framework or OpenCL make these cores easily available for number crunching at home. Algorithms and open source code are freely accessible for several brute-force tasks such as password cracking, rainbow table generation and producing hash collisions.
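Brute forcing maps so well onto thousands of GPU cores because every candidate can be derived from an index independently of all others. The following minimal CPU-only Python sketch shows that structure for an MD5 hash of a short password; the character set, the length limit and the function names are assumptions for illustration. On a GPU (with CUDA or OpenCL) each thread would typically take one index or a slice of indices.

```python
import hashlib
import string
from typing import Optional

CHARSET = string.ascii_lowercase + string.digits  # assumed password alphabet

def index_to_candidate(index: int, length: int) -> str:
    """Map an integer in [0, len(CHARSET)**length) to a fixed-length candidate.
    No shared state is needed, which is why the search parallelizes trivially."""
    chars = []
    for _ in range(length):
        index, digit = divmod(index, len(CHARSET))
        chars.append(CHARSET[digit])
    return "".join(chars)

def brute_force_md5(target_hex: str, max_length: int = 3) -> Optional[str]:
    """Try every candidate up to max_length characters; return the match or None."""
    for length in range(1, max_length + 1):
        for i in range(len(CHARSET) ** length):
            candidate = index_to_candidate(i, length)
            if hashlib.md5(candidate.encode()).hexdigest() == target_hex:
                return candidate
    return None

target = hashlib.md5(b"ab3").hexdigest()
print(brute_force_md5(target))
```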

Again, this is a rough estimate, not very precise and not really to scale (I could be off by several pixels). It’s just a little illustration to give you the picture: in 2010 we have reached 3200 cores screaming along at 800 MHz. A typical high-end gaming PC of 2010 could have entered the TOP500 list a couple of years ago. For example, ASCI Red, leader of the pack back in 2000, reached around 2380 GFLOPs on the LINPACK benchmark, which could be outperformed today by a small LAN party in somebody’s living room:

The ATI Radeon HD5970 is rated at 4640 GFLOPs in single precision and 928 GFLOPs in double precision. Yes, that’s roughly 5 and 1 TeraFLOPs, respectively. For smoothly running computer games. And for cracking our security algorithms.
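The arithmetic behind these ratings can be checked quickly. The sketch assumes the HD5970’s reference configuration of 3200 stream processors at 725 MHz (a bit below the rough 800 MHz of the illustration), each completing one multiply-add (two floating-point operations) per clock, with double precision running at one fifth of that rate. TOP500 LINPACK figures are double precision, so that is the fair number to compare with ASCI Red’s 2380 GFLOPs.

```python
import math

STREAM_PROCESSORS = 3200   # HD5970: two GPUs with 1600 stream processors each
CLOCK_MHZ = 725            # assumed reference core clock
FLOPS_PER_CLOCK = 2        # one multiply-add per stream processor per clock

single_gflops = STREAM_PROCESSORS * FLOPS_PER_CLOCK * CLOCK_MHZ / 1000
double_gflops = single_gflops / 5  # double precision at 1/5 of the single rate

ASCI_RED_GFLOPS = 2380
cards_needed = math.ceil(ASCI_RED_GFLOPS / double_gflops)

print(f"single: {single_gflops:.0f} GFLOPs, double: {double_gflops:.0f} GFLOPs")
print(f"HD5970 cards to pass ASCI Red in double precision: {cards_needed}")
```

Three such cards, a very small LAN party indeed.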

Distributed Attacks

Another wrong assumption was that of a “single adversary”. In recent years we have seen many distributed and cooperative computing projects like SETI@home, Folding@home, prime factorization etc., which use thousands of academic and private computers in parallel to cope with unbelievably complex computational tasks, something a single computer or cluster couldn’t have done in thousands of years.
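Projects of this kind split a huge search into independent work units that volunteers fetch, process and report back. A minimal sketch of the splitting step, assuming a contiguous integer keyspace (for example the indices of all candidate keys) and a hypothetical `split_keyspace` helper; real projects add scheduling, redundancy and result verification on top.

```python
def split_keyspace(size: int, workers: int) -> list[tuple[int, int]]:
    """Divide [0, size) into `workers` contiguous half-open ranges whose
    lengths differ by at most one, so every key is covered exactly once."""
    base, extra = divmod(size, workers)
    ranges = []
    start = 0
    for w in range(workers):
        length = base + (1 if w < extra else 0)
        ranges.append((start, start + length))
        start += length
    return ranges

# Example: 2**40 keys spread over 10,000 volunteer machines.
units = split_keyspace(2**40, 10_000)
print(len(units), units[0], units[-1][1] == 2**40)
```

Because the units do not depend on each other, adding machines divides the wall-clock time almost linearly, which is exactly what the “single adversary” assumption ignores.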

Here is news for those who haven’t heard about it yet: there are massive distributed projects going on, some public and in plain view, some behind closed doors within conspiratorial circles whose aims and goals lie in the dark. Two examples of public projects are freerainbowtables.com and the distributed cracking of the GSM encryption A5/1, led by Karsten Nohl, one of the leading security experts in the area of GSM security. But what about all those projects lurking in the dark? Can we even estimate their risk potential?

Non-Disclosure Information

Mobile communication is one field that was hit hard. Not only the rainbow tables for decrypting A5/1 mentioned above (A5/1 is still used for many connections), but also the free availability of all the technical details, standards, protocols and security mechanisms used in mobile communications like GSM, GPRS, EDGE, UMTS and LTE change the possible risks. The 3GPP initiative made this possible by publishing all relevant standards and specifications free for everyone, regardless of any possible patent restrictions or the like.

The open source design files for building your own BTS (the GSM “Base Transceiver Station”) and, just recently, the Um interface for a Mobile Station (colloquially a “cell phone”) allow any skilled person to build everything needed (including the radio frequency hardware!) to create their own IMSI-Catcher on a shoestring budget. The leaked specifications of the Calypso chipset, once under strict non-disclosure, bring even a used cell phone for some 20 or 30 bucks into the game of GSM insecurity.

Open source projects like OpenBTS, OsmocomBB etc. now give you the possibility to build everything on your own, scrutinize implementations and test your own equipment for vulnerabilities, but they also create threat scenarios in totally unexpected areas:

- “Private” IMSI-Catchers, not controlled by any legal body, used to intercept communications

- IMSI-Catcher-Catcher, allowing individuals under observation to evade legal investigation

- Malicious call setup to high-priced premium services to rip off innocent bystanders

- Injecting or suppressing content (classical man-in-the-middle)

- …whatever your imagination comes up with

Algorithmic Advances

Last but not least, our coders have not been lazy either. Just remember the creation of a seemingly legitimate CA certificate at the Chaos Communication Congress in Berlin in December 2008, which attacked the MD5 hash directly, followed less than a year later by apparently feasible attacks against SHA-1. Although the authors later withdrew their announcement of a differential path for SHA-1 with a complexity of O(2^52), SHA-1 is moving a little into shallow waters. At least parts of a SHA-1-secured message can be chosen through a collision. Maybe that is enough to spoof a certificate; I didn’t have enough time to go into that detail.
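For scale: a generic birthday attack finds a collision in an n-bit hash after about 2^(n/2) evaluations, so the withdrawn 2^52 claim would have been a shortcut of roughly 2^28 against SHA-1’s 160-bit output (and MD5, at 128 bits, already sits at a generic bound of 2^64):

```latex
% Generic (birthday) collision cost for an n-bit hash vs. the withdrawn SHA-1 claim
\text{SHA-1: } n = 160 \;\Rightarrow\; 2^{n/2} = 2^{80}, \qquad
\frac{2^{80}}{2^{52}} = 2^{28} \approx 2.7 \times 10^{8}
```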

AES also received a little dent when it was found that the key schedule of AES-256 (curiously, not that of AES-128) has weaknesses that enable related-key attacks, lowering the safety margin of AES-256 significantly compared to what we believed earlier.
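To make “key schedule” concrete, here is a minimal Python sketch of the AES-256 key expansion as specified in FIPS-197 (helper names are my own). It does not reproduce the related-key attacks; it only shows the schedule they target. Note that the 32-byte key directly supplies the first two round keys, and the 14 cipher rounds are covered by only seven iterations of the rotate-substitute-Rcon step (AES-128 uses ten for its ten rounds).

```python
def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8) modulo the AES polynomial x^8+x^4+x^3+x+1."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11B
        b >>= 1
    return result

def build_sbox() -> list[int]:
    """AES S-box: multiplicative inverse in GF(2^8) followed by the affine map."""
    sbox = []
    for x in range(256):
        inv = 0 if x == 0 else next(y for y in range(1, 256) if gf_mul(x, y) == 1)
        s = inv
        for shift in range(1, 5):
            s ^= ((inv << shift) | (inv >> (8 - shift))) & 0xFF
        sbox.append(s ^ 0x63)
    return sbox

SBOX = build_sbox()

def sub_word(w: int) -> int:
    """Apply the S-box to each byte of a 32-bit word."""
    return int.from_bytes(bytes(SBOX[b] for b in w.to_bytes(4, "big")), "big")

def rot_word(w: int) -> int:
    """Rotate a 32-bit word left by one byte."""
    return ((w << 8) | (w >> 24)) & 0xFFFFFFFF

def expand_key_256(key: bytes) -> list[int]:
    """FIPS-197 key expansion for a 32-byte key: 60 words = 15 round keys."""
    assert len(key) == 32
    w = [int.from_bytes(key[4 * i:4 * i + 4], "big") for i in range(8)]
    rcon = 1
    for i in range(8, 60):
        temp = w[i - 1]
        if i % 8 == 0:
            # Nonlinear step with rotation and round constant (7 times in total).
            temp = sub_word(rot_word(temp)) ^ (rcon << 24)
            rcon = gf_mul(rcon, 2)
        elif i % 8 == 4:
            # Extra S-box step that only the 256-bit schedule has.
            temp = sub_word(temp)
        w.append(w[i - 8] ^ temp)
    return w

key = bytes.fromhex("603deb1015ca71be2b73aef0857d7781"
                    "1f352c073b6108d72d9810a30914dff4")  # FIPS-197 example key
print(f"{expand_key_256(key)[8]:08x}")
```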

Other advances have been made in optimizing code, more specifically GPU code (see above), boosting current implementations of rainbow table generation by getting rid of some costly functions used in previous implementations.
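The time-memory trade-off behind rainbow tables fits in a few lines. This toy Python sketch assumes MD5 over 4-digit PINs and chains of 100 steps (all hypothetical parameters, and nothing like the optimized GPU implementations mentioned above). It stores only the start and end of each chain and recomputes the rest during lookup; the reduction function that changes with the chain position is what distinguishes rainbow tables from classic Hellman tables.

```python
import hashlib
from typing import Optional

PW_LEN = 4                # toy keyspace: 4-digit PINs
KEYSPACE = 10 ** PW_LEN
CHAIN_LEN = 100           # hypothetical chain length

def h(pw: str) -> bytes:
    return hashlib.md5(pw.encode()).digest()

def reduce_hash(digest: bytes, position: int) -> str:
    """Map a hash back into the password space; mixing in the position gives
    every column its own reduction function (the 'rainbow')."""
    n = (int.from_bytes(digest[:8], "big") + position) % KEYSPACE
    return str(n).zfill(PW_LEN)

def chain_end(pw: str, from_pos: int = 0) -> str:
    """Walk a chain from column `from_pos` to its end."""
    for pos in range(from_pos, CHAIN_LEN):
        pw = reduce_hash(h(pw), pos)
    return pw

def build_table(starts: list[str]) -> dict[str, str]:
    # Only end -> start is stored; everything in between is recomputed on demand.
    return {chain_end(s): s for s in starts}

def lookup(table: dict[str, str], target: bytes) -> Optional[str]:
    # Assume the target sits in column `col`, trying the last column first.
    for col in range(CHAIN_LEN - 1, -1, -1):
        end = chain_end(reduce_hash(target, col), col + 1)
        if end in table:
            cand = table[end]  # regenerate that chain from its start
            for pos in range(CHAIN_LEN):
                if h(cand) == target:
                    return cand
                cand = reduce_hash(h(cand), pos)
            # False alarm (merged chains): keep searching other columns.
    return None

table = build_table(["0000", "1234", "5678", "9999"])
start = next(iter(table.values()))
second = reduce_hash(h(start), 0)  # second password in that chain
print(second, lookup(table, h(second)))  # both values are identical
```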

So what is left over?

Still enough to survive for a little while. Don’t panic (always good advice) and look carefully at the controls you have implemented:

- Physical controls (exactly what it sounds like: locks and doors and safes and walls and cameras etc…)

- Technical controls (protocols, algorithms, authentication, authorization, integrity mechanisms etc.)

- Administrative controls (security policy, incident response, monitoring, security awareness etc.)

And build multiple defenses: avoid single points of failure and aggregate multiple independent controls for high-value assets.
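The benefit of layering can be sketched with a simple model. Assuming the controls fail independently of one another (in practice they often share dependencies, which is exactly the single point of failure to avoid), an attacker has to defeat all of them, so the failure probabilities multiply. The numbers are hypothetical.

```python
import math

def breach_probability(control_failure_probs: list[float]) -> float:
    """Probability that every layer fails at once, assuming the controls fail
    independently (a strong assumption: shared dependencies break it)."""
    return math.prod(control_failure_probs)

# Hypothetical per-layer failure probabilities (not measured data):
single = breach_probability([0.10])
layered = breach_probability([0.10, 0.20, 0.30])  # technical, physical, administrative
print(f"single control: {single:.3f}, three layers: {layered:.3f}")
```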

<TooLong;Didn’tRead>

- Maybe our current security technology can’t withstand the latest developments in hardware, distributed computing, information disclosure and optimized algorithms for much longer.

- Don’t panic: a robust and well-organized security organization can compensate for technological weaknesses.

</TooLong;Didn’tRead>

Comments, feedback etc. are welcome, just be polite. I may be wrong and I may misunderstand some things, but I’m pretty sure that many people place too much trust in technology and underestimate changes in our threat landscape.

Hope to see some of our readers at DeepSec in November!

Michael “MiKa” Kafka