Translated Article: EU Control Committee Blocks Regulation on Chat Surveillance

EU-Kontrollausschuss blockt Verordnung zur Chat-Überwachung by Erich Moechel for fm4.orf.at

[We have translated this article because we have criticised client-side scanning and the introduction of backdoors to circumvent encryption in past articles. Erich Moechel has an update on the current EU initiative to make encryption useless.]

A leaked report from the Commission’s control committee shows that officials from the Commission’s interior department have not presented a legally compliant draft in two years.

The publication of the regulation on the automated monitoring of chats, which had been announced for the end of March, has been postponed yet again. This regulation, ostensibly aimed at combating child abuse, is now 18 months behind schedule. A recent leak reveals the reason for this series of postponements.

The officials responsible for the Commission’s draft were unable to produce a text that meets the minimum requirements of the EU Regulatory Scrutiny Board. This committee, which accompanies all new regulations, worded its opinion on the draft diplomatically, but its substance is devastating.

Translation from the diplomatic lingo

The draft was “substantially changed and improved in many areas” and is therefore generally assessed positively, the introduction says. However, there are reservations about “significant deficiencies” that the Board expects to be addressed. The four most important objections in the summary are formulated very cautiously, but a “significant deficiency” is grounds for rejection. The Commission’s Regulatory Scrutiny Board has thus determined that the Directorate-General responsible for the draft has not complied with the legal requirements of the Union.

The second objection relates to the technical options listed in the draft for discovering previously unknown child abuse material and so-called “grooming” by adults. This makes it clear that the draft from Commissioner Ylva Johansson’s home affairs department requires comprehensive scanning of all text chats, including images and videos, for child abuse material. In its report, the committee points to the ban on any general obligation to monitor communications, which applies in the EU. Unknown child abuse material, however, can only be discovered automatically if the algorithms of an AI program screen the entire mass of data and calculate the probability that particular images, videos or conversations relate to child abuse.

Placed under initial suspicion by algorithms

AI applications in the field of law enforcement are classified by the Commission itself as “high-risk applications”, for which significant requirements are foreseen in the upcoming EU AI regulation (the AI Act). The high error rates of this technology, which is still in its infancy, and in particular its false hits, are by now well known in the Commission. Word has apparently just not reached Ylva Johansson’s interior department yet. The draft envisages that, above a certain number of such “hits”, the relevant data records are automatically passed on to the prosecuting authorities. In other words, an algorithm calculates the probability that the material justifies an initial suspicion.
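Why false hits matter so much at this scale can be illustrated with a minimal sketch of Bayes’ rule. All three input values below are hypothetical and chosen only for illustration; they are not taken from the draft, the committee’s report or any real classifier, and the function name `share_of_true_hits` is likewise an assumption of this example.

```python
def share_of_true_hits(prevalence, sensitivity, false_positive_rate):
    """Fraction of flagged messages that really are illegal (Bayes' rule)."""
    true_hits = prevalence * sensitivity
    false_hits = (1.0 - prevalence) * false_positive_rate
    return true_hits / (true_hits + false_hits)


# HYPOTHETICAL inputs, not figures from the article or the draft:
# 1 in 100,000 messages is illegal, the classifier detects 90% of those,
# and it wrongly flags 0.1% of harmless messages.
precision = share_of_true_hits(
    prevalence=1e-5,
    sensitivity=0.9,
    false_positive_rate=1e-3,
)
print(f"Share of flags that are correct: {precision:.2%}")
```

Under these assumed values, fewer than one in a hundred flagged messages would be a true hit, simply because harmless messages vastly outnumber illegal ones. Different assumptions give different results, but the effect of a low base rate remains.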

Apparently, the draft mentions several “solutions” for how the chats are to be tapped, one of which has to be designated as preferred. In point three, the Board criticizes “that the efficiency and balance of the preferred solution is not sufficiently justified”. As is well known, this “preferred solution” consists of scanning the content on the smartphone before an end-to-end encrypted connection is established. A dozen of the world’s best-known academic cryptographers tore this approach of “client-side scanning” to pieces last fall. However, the Regulatory Scrutiny Board does not reveal how these “significant deficiencies” could be eliminated if they affect the entire approach of this regulation.

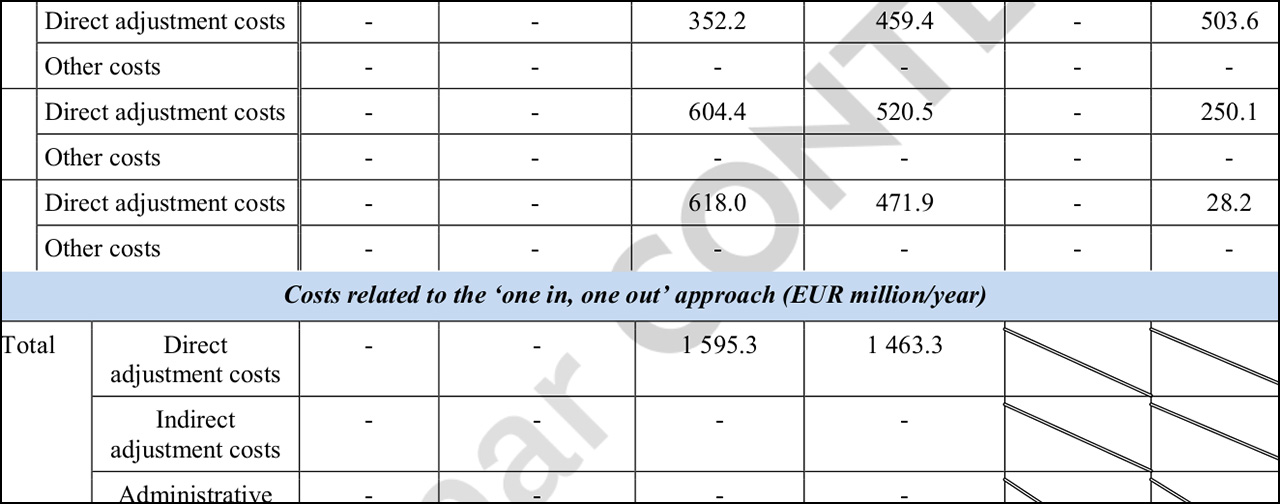

This graphic shows the cost calculation. The two columns on the left show that there are no costs for users. For industry, one-off costs of 1.5953 billion euros and annual costs of 1.4633 billion euros are calculated. On the far right are the running costs for the EU budget. The individual items add up to around 820 million euros per year.

The Regulatory Scrutiny Board is an independent body within the Commission that is meant to ensure the quality of legislative processes through early-stage impact assessments and evaluations.

Fantasy instead of numbers, including cover-up

And then there are the ongoing and one-off costs, which the committee finds are not described “accurately enough”. This concerns the planned establishment of an EU center against child abuse, through which the scans carried out by Apple, WhatsApp and others on their customers’ smartphones and PCs are to run. This is supposedly meant to prevent abuse by the operators of chat and messenger services during scanning. The table shows a veritable numbers swindle that is planned in the name of “reducing bureaucracy”.

For example, the running costs that arise annually for industry and authorities through the individual measures and the operation of the abuse center are not to be subject to the usual detailed cost/benefit calculations and quality controls. Instead, they are to be handled by a “one in, one out” procedure (see screenshot), in which, for example, the running costs for the Commission are no longer shown individually and are therefore not verifiable. So nobody wants to know how the 820 million euros in costs that fall on the EU budget every year come about. Yet it is apparently already known that the comprehensive monitoring of chats would ensure “crime reduction” and thus bring annual savings of around 3.488 billion euros.
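To see what these figures imply when taken at face value, the annual items quoted in this article can simply be added up. The following is only a rough arithmetic sketch: the constant and function names are made up for this example, the one-off costs are left out, and the claimed savings are exactly the figure whose derivation cannot be verified.

```python
# Annual figures as quoted in this article, in billions of euros.
INDUSTRY_ANNUAL_COSTS = 1.4633
EU_BUDGET_ANNUAL_COSTS = 0.820   # "around 820 million euros"
CLAIMED_ANNUAL_SAVINGS = 3.488   # attributed to "crime reduction"


def annual_balance(savings, *costs):
    """Claimed savings minus the sum of the listed annual costs."""
    return round(savings - sum(costs), 4)


net = annual_balance(
    CLAIMED_ANNUAL_SAVINGS,
    INDUSTRY_ANNUAL_COSTS,
    EU_BUDGET_ANNUAL_COSTS,
)
print(f"Claimed annual net benefit: {net} billion euros")
```

The positive balance of roughly 1.2 billion euros per year depends entirely on the savings estimate. If that one number does not hold, the calculation turns negative.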

How it will (not) continue

The new publication date is now April 27th. A regulation whose approach and methods violate applicable EU law in every respect is therefore supposed to be brought into line with the law within one month. Not even the responsible commissioner and initiator, Ylva Johansson, can still believe that.